Introduction:

Page replacement algorithms govern how an operating system (OS) moves pages between main memory and secondary storage. Belady’s Anomaly is a paradoxical result about these algorithms: under some replacement policies, giving a process more memory can cause it to fault more often. This article examines the anomaly’s origins, its causes, and what it reveals about memory management in an OS.

I. Understanding Page Replacement Algorithms:

A. Core Functionality:

- Memory Hierarchy: Operating systems rely on a hierarchical memory structure, encompassing registers, caches, main memory (RAM), and secondary storage (typically a hard disk). Efficient management of this hierarchy is critical for system performance.

- Page Replacement: When a process references a page that is not currently in main memory, a page fault occurs and the OS must load that page from secondary storage. If no frame is free, the OS first evicts a resident page to make room. The page replacement algorithm decides which page is evicted.

B. Role of Page Replacement Algorithms:

- Optimization Goals: Page replacement algorithms aim to minimize page faults and make the best use of limited main memory. Different algorithms rank pages by order of arrival, recency of access, frequency of access, or a combination of these.

- Complexity Trade-offs: The design of page replacement algorithms involves navigating complex trade-offs between simplicity and optimality. Striking the right balance is essential for achieving efficient memory management.

II. Introduction to Belady’s Anomaly:

A. Definition:

- Unexpected Behavior: Belady’s Anomaly is the counterintuitive phenomenon in which increasing the number of page frames available in main memory increases the number of page faults for the same sequence of page references, rather than decreasing it.

- Historical Perspective: The anomaly is named after László Bélády (Laszlo Belady), the IBM researcher who, together with R. A. Nelson and G. S. Shedler, described it in a seminal 1969 paper.

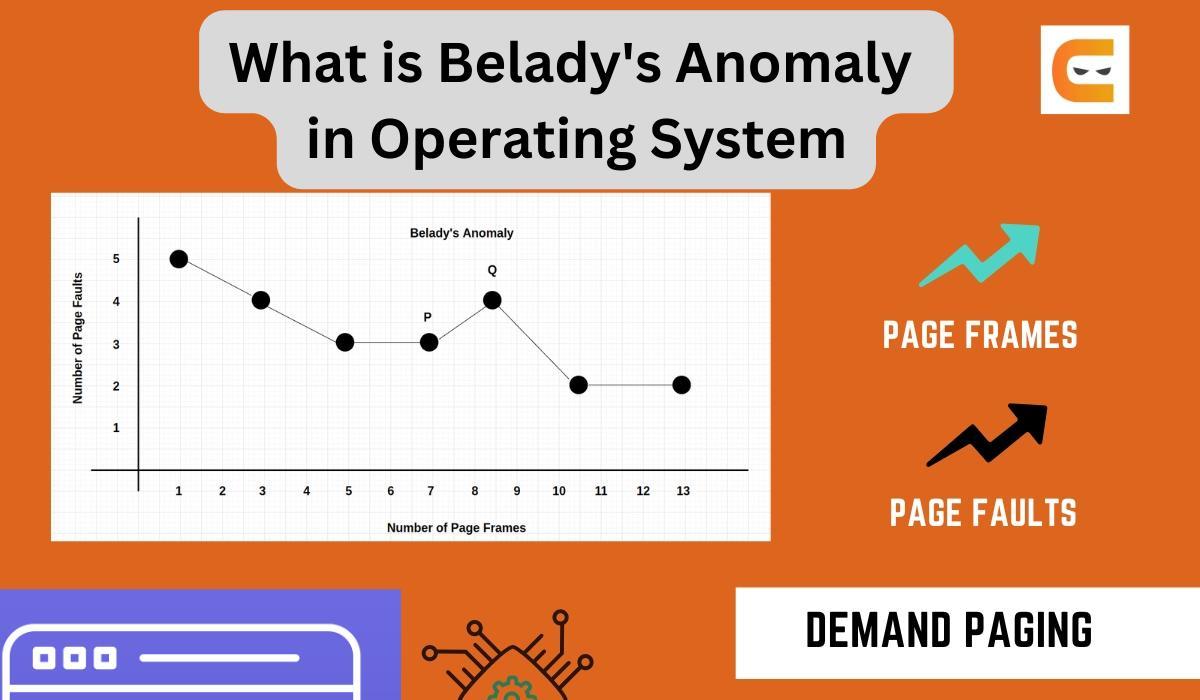

B. Visualizing Belady’s Anomaly:

- Graphical Representation: Belady’s Anomaly is often visualized using graphs that plot the number of page faults against the number of page frames available. In certain scenarios, adding more page frames results in an upward trend in page faults, defying the anticipated trend of diminishing page faults with increased memory.

- Contradicting Intuition: The anomaly challenges the intuitive expectation that more memory should always lead to better performance by accommodating a larger working set of pages.

III. Causes of Belady’s Anomaly:

A. FIFO Page Replacement Algorithm:

- Basic Principle: Belady’s Anomaly is closely associated with the First-In-First-Out (FIFO) page replacement algorithm, a straightforward approach where the oldest page in memory is selected for replacement.

- Lack of Optimality: FIFO, while conceptually simple, is not optimal. It ranks pages only by when they were loaded and ignores how recently or how often they have been used, so it cannot favor pages that are likely to be accessed again soon.
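The FIFO policy described above can be sketched in a few lines. This is a minimal simulation, not an OS implementation: the function name `fifo_faults` and the reference string `REFS` are assumptions introduced here for illustration (the string is one commonly used in textbook discussions of the anomaly).

```python
from collections import deque

def fifo_faults(refs, frames):
    """Count page faults for FIFO replacement with a fixed number of frames."""
    queue = deque()    # resident pages, oldest arrival on the left
    resident = set()   # the same pages, kept for fast membership tests
    faults = 0
    for page in refs:
        if page in resident:
            continue                 # hit: FIFO does not reorder on access
        faults += 1
        if len(queue) == frames:     # memory full: evict the oldest arrival
            resident.discard(queue.popleft())
        queue.append(page)
        resident.add(page)
    return faults

# Illustrative reference string (an assumption, not taken from the article).
REFS = [1, 2, 3, 4, 1, 2, 5, 1, 2, 3, 4, 5]

print(fifo_faults(REFS, 3))  # 9
print(fifo_faults(REFS, 4))  # 10
```

With this string, the fourth frame costs one extra fault, which is the anomaly in its smallest form.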

B. Eviction of Potentially Relevant Pages:

- Blind to Usage: FIFO evicts strictly by arrival order, so it can evict a heavily used page that is about to be accessed again simply because it was loaded long ago. This lack of adaptability can result in suboptimal use of available memory.

- Loss of Inclusion: Adding frames shifts FIFO’s eviction order, so a larger memory can end up without a page that a smaller memory still holds at the same point in the reference sequence. Because the pages resident with n frames are not guaranteed to be a subset of those resident with n + 1 frames, the larger configuration can fault where the smaller one hits. This is what produces Belady’s Anomaly.
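To make the point about diverging memory contents concrete, the sketch below records which pages are resident after each reference under FIFO, once with 3 frames and once with 4, and reports the steps where the smaller memory holds a page the larger one has already evicted. The helper `fifo_resident_sets` and the reference string are assumptions chosen for illustration.

```python
from collections import deque

def fifo_resident_sets(refs, frames):
    """Return the set of resident pages after each reference under FIFO."""
    queue = deque()    # oldest arrival on the left
    history = []
    for page in refs:
        if page not in queue:
            if len(queue) == frames:
                queue.popleft()      # evict the oldest arrival
            queue.append(page)
        history.append(frozenset(queue))
    return history

# Illustrative reference string (an assumption, not taken from the article).
REFS = [1, 2, 3, 4, 1, 2, 5, 1, 2, 3, 4, 5]
small = fifo_resident_sets(REFS, 3)
large = fifo_resident_sets(REFS, 4)

# 0-based steps where the 3-frame contents are NOT a subset of the 4-frame contents.
violations = [i for i, (s, l) in enumerate(zip(small, large)) if not s <= l]
for i in violations:
    print(f"after reference {i} (page {REFS[i]}): "
          f"3 frames hold {sorted(small[i])}, 4 frames hold {sorted(large[i])}")
```

For this string the first divergence comes right after page 5 is loaded: the 4-frame memory has just evicted page 1, while the 3-frame memory still holds it and hits on the next reference.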

IV. Scenarios Illustrating Belady’s Anomaly:

A. Sequential Access Patterns:

- Linear Memory Access: Belady’s Anomaly tends to appear when a process steps through pages in order and then revisits them, so that the same sequence recurs. A purely sequential scan with no reuse faults once per page regardless of the number of frames, so reuse is a necessary ingredient.

- FIFO’s Rigidity: In such patterns, FIFO evicts pages strictly in load order, so pages that the recurring sequence is about to revisit can be evicted just before they are needed.

B. Theoretical Examples:

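- Hypothetical Situations: The anomaly is most striking in theoretical examples where the memory access pattern is carefully crafted to expose the limitations of FIFO. A classic example is the reference string 1, 2, 3, 4, 1, 2, 5, 1, 2, 3, 4, 5, which causes 9 page faults under FIFO with three frames but 10 with four.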

- Graphical Representation: Graphs depicting page faults against the number of page frames may exhibit unexpected spikes, challenging the assumption that more memory would always result in fewer page faults.
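The fault-versus-frames curve described above can be reproduced as a small text plot. The sketch below is illustrative only: `fifo_faults`, the reference string, and the range of frame counts are assumptions made here, and the bars stand in for a real graph.

```python
from collections import deque

def fifo_faults(refs, frames):
    """Count page faults for FIFO replacement with a fixed number of frames."""
    queue = deque()                  # oldest arrival on the left
    faults = 0
    for page in refs:
        if page in queue:
            continue                 # hit
        faults += 1
        if len(queue) == frames:
            queue.popleft()          # evict the oldest arrival
        queue.append(page)
    return faults

# Illustrative reference string (an assumption, not taken from the article).
REFS = [1, 2, 3, 4, 1, 2, 5, 1, 2, 3, 4, 5]

# Fault count for each frame count from 1 to 5.
curve = {n: fifo_faults(REFS, n) for n in range(1, 6)}
for n, faults in curve.items():
    print(f"{n} frames | {'#' * faults} {faults}")

# Frame counts at which the curve goes up instead of down.
anomalies = [n for n in range(2, 6) if curve[n] > curve[n - 1]]
print("fault count rises at frame counts:", anomalies)
```

The bar for 4 frames is longer than the bar for 3, which is the spike such graphs show.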

V. Implications of Belady’s Anomaly:

A. Algorithmic Limitations:

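- No Universal Practical Optimum: Belady’s Anomaly underscores that no practical page replacement algorithm is best in every case. The provably optimal policy, which evicts the page whose next use lies farthest in the future, requires knowledge of future references and therefore cannot be implemented in a real system. Practical algorithms only approximate it, and how well each one does depends on the specific access patterns of the processes it serves.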

- Practical Relevance: While Belady’s Anomaly is most prominently associated with FIFO, its broader implication is a reminder that the effectiveness of page replacement algorithms is context-dependent and influenced by the nature of the workload.

B. Algorithm Selection Considerations:

- Algorithm Flexibility: The anomaly emphasizes the importance of algorithmic flexibility. Page replacement algorithms that adapt to varying access patterns may outperform rigid algorithms like FIFO in certain scenarios.

- Real-world Impacts: In practical terms, understanding Belady’s Anomaly informs the selection and tuning of page replacement algorithms based on the anticipated characteristics of the workload a system is expected to handle.

VI. Mitigating Belady’s Anomaly:

A. Adaptive Page Replacement Algorithms:

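- Embracing Adaptability: To avoid Belady’s Anomaly, operating systems can use page replacement algorithms that track how pages are actually used rather than only when they were loaded. Stack algorithms such as Least Recently Used (LRU) are immune to the anomaly: the pages they keep with n frames are always a subset of the pages they keep with n + 1 frames, so adding frames can never add faults.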

- Dynamic Adjustments: Adaptive algorithms dynamically adjust their behavior based on the evolving workload, ensuring a more nuanced response to changing access patterns.
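As one example of a usage-aware policy, Least Recently Used (LRU) is known not to exhibit Belady’s Anomaly. The sketch below is a simplified model rather than how a kernel would implement it (real systems typically approximate LRU); `lru_faults` and the reference string are assumptions introduced for illustration.

```python
from collections import OrderedDict

def lru_faults(refs, frames):
    """Count page faults for LRU replacement with a fixed number of frames."""
    recency = OrderedDict()              # least recently used page first
    faults = 0
    for page in refs:
        if page in recency:
            recency.move_to_end(page)    # hit: mark as most recently used
            continue
        faults += 1
        if len(recency) == frames:
            recency.popitem(last=False)  # evict the least recently used page
        recency[page] = True
    return faults

# Illustrative reference string (an assumption, not taken from the article).
REFS = [1, 2, 3, 4, 1, 2, 5, 1, 2, 3, 4, 5]

lru_curve = [lru_faults(REFS, n) for n in range(1, 6)]
print(lru_curve)  # [12, 12, 10, 8, 5]
```

On this string the fault count never rises as frames are added. LRU is not always better than FIFO at a given frame count (here it faults 10 times with 3 frames), but its curve is guaranteed not to turn upward.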

B. Machine Learning Approaches:

- Predictive Modeling: Machine learning approaches can be employed to predict future page accesses based on historical patterns. Predictive modeling allows for more informed decisions in selecting pages for retention or eviction.

- Learning from Data: By leveraging machine learning, operating systems can learn from past behaviors, reducing the impact of anomalies and improving the overall efficiency of memory management.
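Any predictor of future accesses is, in effect, approximating the clairvoyant policy that evicts the page whose next use lies farthest in the future (often called OPT or MIN). The sketch below simulates that policy offline, where the full reference string is known in advance. It is not a machine learning model, and `opt_faults` and the reference string are assumptions made for illustration.

```python
def opt_faults(refs, frames):
    """Count faults for the clairvoyant policy (evict the page used farthest ahead)."""
    resident = set()
    faults = 0
    for i, page in enumerate(refs):
        if page in resident:
            continue                     # hit
        faults += 1
        if len(resident) == frames:
            future = refs[i + 1:]        # references still to come

            def next_use(p):
                # Distance to the next reference of p, or infinity if none.
                return future.index(p) if p in future else float("inf")

            resident.remove(max(resident, key=next_use))
        resident.add(page)
    return faults

# Illustrative reference string (an assumption, not taken from the article).
REFS = [1, 2, 3, 4, 1, 2, 5, 1, 2, 3, 4, 5]

opt_curve = [opt_faults(REFS, n) for n in range(1, 6)]
print(opt_curve)  # [12, 9, 7, 6, 5]
```

These counts are a lower bound for this string: no replacement policy, learned or otherwise, can fault less at the same frame count, so they give a yardstick for judging a predictive approach.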

VII. Real-world Relevance and Research Directions:

A. Practical Implications:

- Application to Modern Systems: While Belady’s Anomaly was identified in the context of early computing systems, its lessons remain relevant in modern computing environments. The principles of adaptive algorithms and contextual memory management continue to shape contemporary OS design.

- Integration with Contemporary Algorithms: The principles highlighted by Belady’s Anomaly have influenced the development and integration of more sophisticated page replacement algorithms in modern operating systems.

B. Ongoing Research:

- Memory Management Advances: Ongoing research in memory management continues to explore novel algorithms and strategies to address the challenges posed by evolving workloads and application characteristics.

- Interdisciplinary Exploration: As computing architectures evolve, interdisciplinary approaches that incorporate insights from areas like machine learning, data science, and cognitive computing contribute to the refinement of memory management techniques.

Conclusion:

Belady’s Anomaly is a reminder of the complexity inherent in memory management within operating systems. Its discovery in the early days of computing exposed the limitations of page replacement algorithms, particularly the inflexibility of FIFO, and showed that more memory does not automatically mean fewer page faults. While the anomaly itself arises only under specific policies and access patterns, its lasting value lies in the broader insight it offers into optimizing memory usage across diverse workloads. As operating systems continue to evolve, the lessons from Belady’s Anomaly continue to guide the development of adaptive algorithms and efficient memory management strategies.